Machine Learning for Drug-Drug Interaction Prediction

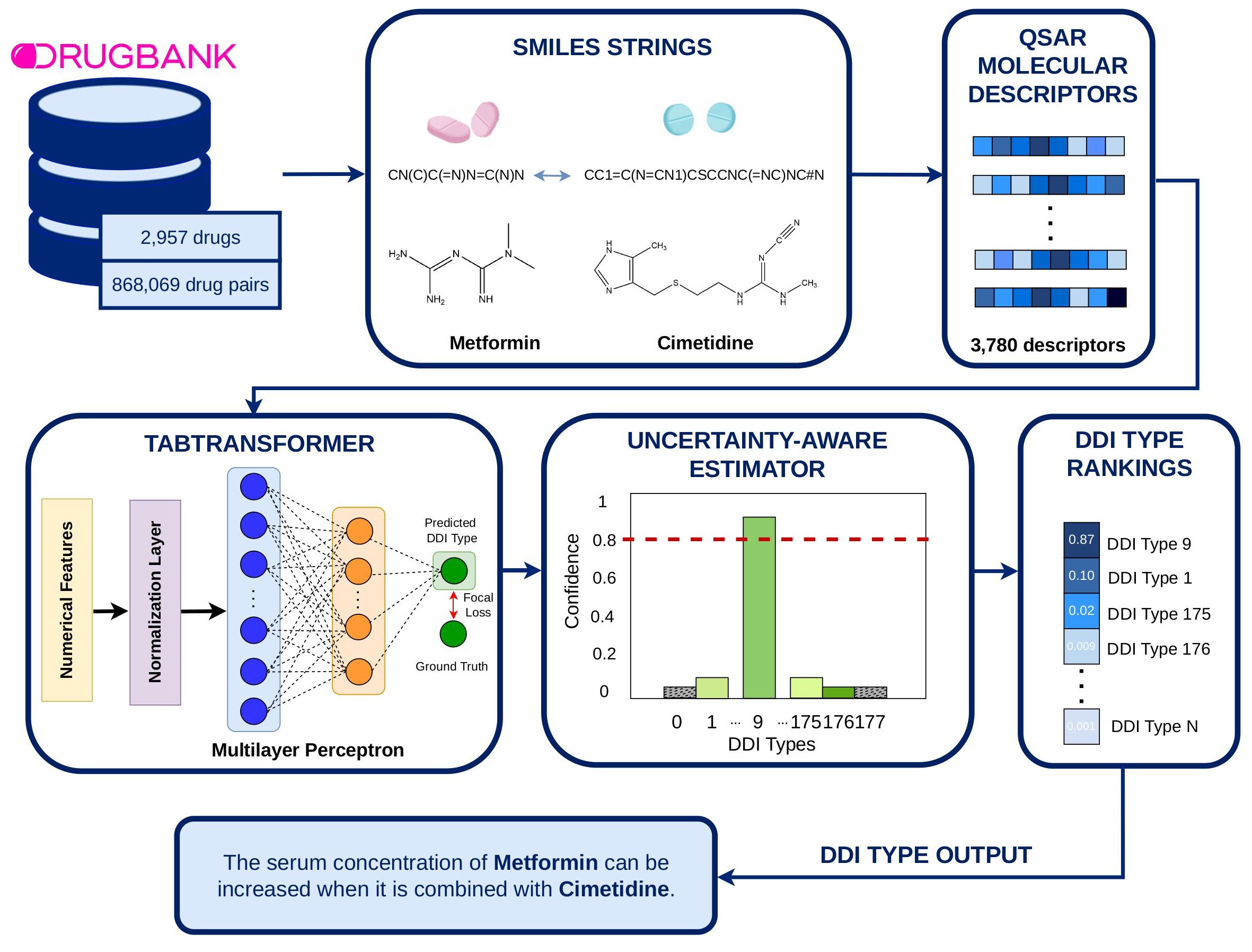

Purpose: Polypharmacy safety depends on accurate drug–drug interaction (DDI) prediction, yet balancing model complexity against reliability remains challenging. We address two needs, more trustworthy predictions and better handling of class imbalance, by re-evaluating how drugs are represented as features in neural architectures.

Methods: We introduce tDDI, an uncertainty-aware tabular transformer framework. Rather than relying solely on learned embeddings, tDDI uses explicit physicochemical descriptors as a robust input signal and integrates an uncertainty estimator to mitigate the severe long-tail class imbalance in pharmacological data.

Results: We demonstrate that explicit descriptors outperform complex categorical embeddings in this context. tDDI significantly improves precision for rare adverse events and, in a prospective evaluation of five novel drugs approved by the FDA in late 2025, successfully identified clinically critical risks.

Conclusion: By combining quantitative explanations with natural-language reasoning, tDDI improves clinical utility. Our findings suggest that integrating domain-specific descriptors with uncertainty estimation sets a new standard for trustworthy DDI prediction and offers a robust complement to existing methods.

Data Statistics

- Unique drugs: 2,957 (distinct drugs in the dataset)
- Drug pairs: 868,069 (interaction pairs analyzed)
- Interaction types: 178 (classification categories)
- Features: 3,780 (molecular fingerprints)

Evaluation Metrics

- Accuracy: 97.96%
- F1-score: 89.92%
- Recall: 88.69%
- Precision: 92.49%

Methodology

Feature Extraction

Drug features are extracted from SMILES representations to encode molecular structural information in a numerical format suitable for model input.
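As a rough illustration of turning SMILES strings into model-ready numbers, the sketch below computes a handful of count-based descriptors using only the Python standard library, then concatenates the two drugs' vectors into one tabular row per pair. It is not the tDDI feature extractor: the tokenizer regex, the descriptor set, and the names `smiles_descriptors` and `pair_features` are hypothetical, and a real pipeline would typically rely on a cheminformatics toolkit such as RDKit for physicochemical descriptors and fingerprints.

```python
import re

# Hypothetical toy tokenizer (not a full SMILES parser): bracket atoms,
# two-letter halogens, organic-subset atoms (lowercase = aromatic),
# ring-closure labels, bond symbols and branch parentheses.
TOKEN_RE = re.compile(r"\[[^\]]+\]|Br|Cl|[BCNOPSFI]|[bcnops]|%\d{2}|\d|[=#\-+()/\\@.]")
BRACKET_ELEMENT_RE = re.compile(r"\[\d*([A-Z][a-z]?|[a-z])")
ELEMENTS = ["C", "N", "O", "S", "P", "F", "Cl", "Br", "I"]


def smiles_descriptors(smiles: str) -> list[float]:
    """Return a small count-based descriptor vector (15 values) for one SMILES string."""
    counts = dict.fromkeys(ELEMENTS, 0)
    aromatic = heavy = ring_labels = double = triple = branches = 0
    for tok in TOKEN_RE.findall(smiles):
        symbol = None
        if tok.startswith("["):
            # Bracket atom such as [NH4+] or [nH]: keep only the element symbol.
            m = BRACKET_ELEMENT_RE.match(tok)
            symbol = m.group(1) if m else None
        elif tok[0].isalpha():
            symbol = tok
        elif tok[0].isdigit() or tok.startswith("%"):
            ring_labels += 1
        elif tok == "=":
            double += 1
        elif tok == "#":
            triple += 1
        elif tok == "(":
            branches += 1
        if symbol is not None and symbol != "H":
            heavy += 1
            if symbol.islower():  # lowercase atoms are aromatic in SMILES
                aromatic += 1
                symbol = symbol.capitalize()
            if symbol in counts:
                counts[symbol] += 1
    rings = ring_labels // 2  # each ring-closure label opens and closes once
    return [float(counts[e]) for e in ELEMENTS] + [
        float(aromatic), float(heavy), float(rings),
        float(double), float(triple), float(branches),
    ]


def pair_features(smiles_a: str, smiles_b: str) -> list[float]:
    """Concatenate both drugs' descriptors into one tabular row for the pair."""
    return smiles_descriptors(smiles_a) + smiles_descriptors(smiles_b)


if __name__ == "__main__":
    aspirin = "CC(=O)Oc1ccccc1C(=O)O"
    row = pair_features(aspirin, "CCO")  # aspirin paired with ethanol
    print(len(row))  # 30 features: 15 per drug
```

Plain concatenation makes the row depend on drug order; an order-invariant alternative (for example, summing the two vectors) is a design choice this page does not specify.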

TabTransformer Model

A TabTransformer with uncertainty estimation predicts the interaction type of each drug pair from structured drug descriptors.
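The page does not state which uncertainty estimator tDDI uses. One common choice, assumed here purely for illustration, is to run several stochastic forward passes (for example, with dropout left active, known as Monte Carlo dropout) and summarize the resulting softmax vectors. The sketch below computes the averaged class probabilities, the predictive entropy (total uncertainty), and the mutual information between prediction and model stochasticity (an estimate of epistemic uncertainty). It flags pairs whose normalized entropy exceeds a hypothetical threshold `max_norm_entropy`; the names `predictive_summary` and `Uncertainty` are illustrative.

```python
import math
from typing import NamedTuple, Sequence


class Uncertainty(NamedTuple):
    label: int                 # argmax of the averaged probabilities
    mean_probs: list[float]    # average over the T stochastic passes
    norm_entropy: float        # predictive entropy / log(K), in [0, 1]
    mutual_information: float  # H[mean p] - mean_t H[p_t] (epistemic part)
    flagged: bool              # True if norm_entropy exceeds the threshold


def entropy(p: Sequence[float]) -> float:
    """Shannon entropy in nats, skipping zero-probability classes."""
    return -sum(x * math.log(x) for x in p if x > 0.0)


def predictive_summary(passes: Sequence[Sequence[float]],
                       max_norm_entropy: float = 0.5) -> Uncertainty:
    """Summarize T softmax vectors (one per stochastic forward pass) for one pair."""
    t, k = len(passes), len(passes[0])
    mean = [sum(p[j] for p in passes) / t for j in range(k)]
    total = entropy(mean)
    expected = sum(entropy(p) for p in passes) / t
    # Clamp tiny negative values caused by floating-point rounding.
    mi = max(0.0, total - expected)
    norm = total / math.log(k) if k > 1 else 0.0
    label = max(range(k), key=mean.__getitem__)
    return Uncertainty(label, mean, norm, mi, norm > max_norm_entropy)


if __name__ == "__main__":
    # Five passes that agree closely: low entropy, near-zero mutual information.
    agree = [[0.97, 0.01, 0.01, 0.01]] * 5
    # Four passes that each favour a different class: high entropy and MI.
    disagree = [[0.7, 0.1, 0.1, 0.1], [0.1, 0.7, 0.1, 0.1],
                [0.1, 0.1, 0.7, 0.1], [0.1, 0.1, 0.1, 0.7]]
    print(predictive_summary(agree).flagged)     # False
    print(predictive_summary(disagree).flagged)  # True
```

A rule like this lets a system abstain on low-confidence pairs instead of forcing a guess among the 178 interaction types; whether tDDI applies such a rule is not stated in the source.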

LIME Explanations

Local Interpretable Model-agnostic Explanations (LIME) show which features drive each individual prediction.

Result Gallery

Visual results and analysis from our drug-drug interaction prediction research

Model Pipeline